Run AI Chatbots on Your Phone in 2026: No Internet, No Cloud, No Account

You can run Gemma 4, Llama 3.2, Qwen 2.5, Phi-4, and Dolphin on your phone right now. No command line. No Python. No cloud. Here's what works, what you need, and what you can actually do with it.

You've probably heard that you can run AI models on your phone now. Maybe you saw a demo on social media, or a tech article mentioned it. But when you tried to figure out how, you found instructions involving Python, pip install, llama.cpp compilation, terminal commands, and GGUF file downloads from Hugging Face.

You closed the tab.

Here's the version without the command line: in 2026, you can download an app, tap a button to download a model, and start chatting with a local AI that runs entirely on your phone. No internet after the initial download. No account. No cloud. No terminal.

This article covers what's actually possible, which models work, how much phone you need, and what you can realistically do with pocket-sized AI.

What "Running AI on Your Phone" Actually Means

When you use ChatGPT or Gemini, your question travels over the internet to a data center full of GPUs. The model processes your question there and sends the answer back. You need internet. You need an account. Your question is logged.

Running AI on your phone means the entire model lives on your device. Your phone's processor handles the computation. The question goes from your keyboard to the model to the screen. No network involved. No server. No external dependency.

The format that makes this work is GGUF (GPT-Generated Unified Format). It's a file format optimized for running quantized AI models on consumer hardware. A GGUF file is essentially a compressed brain that your phone can load and run.

Quantization is what makes large models fit on small devices. A model that would normally require 16 GB of memory gets compressed to 4-bit precision, reducing its size by roughly 4x while keeping most of its intelligence. A 3 billion parameter model quantized to Q4_K_M fits in about 2 GB.

What Models Can You Run Right Now?

Here's what's available today, with real-world specifications:

Small Models (4 GB RAM phones)

| Model | Download | Quality |

|---|---|---|

| TinyLlama 1.1B | 638 MB | Basic. Answers simple questions. Fast responses. |

| Llama 3.2 1B | 768 MB | Better than TinyLlama. Good general knowledge. |

| Dolphin 1B | 770 MB | Same size as Llama 1B but answers questions that standard models refuse. |

Mid-Range Models (6-8 GB RAM phones)

| Model | Download | Quality |

|---|---|---|

| Qwen 2.5 1.5B | 1 GB | Good multilingual support. Solid quality for size. |

| Gemma 2 2B | 1.6 GB | Clean, well-structured responses. |

| Llama 3.2 3B | 1.9 GB | Sweet spot for most people. Noticeably smarter than 1B. |

| Qwen 2.5 3B | 2 GB | Best multilingual option. |

| Phi-4 Mini | 2.3 GB | Strong reasoning and math for its size. |

| Gemma 3 4B | 2.3 GB | Excellent instruction-following. |

Large Models (8 GB+ RAM phones)

| Model | Download | Quality |

|---|---|---|

| Dolphin 8B | 4.6 GB | Full uncensored model. Answers everything. |

| Llama 3.1 8B Abliterated | 4.6 GB | Llama with safety filters removed. |

| Hermes 3 8B | 4.6 GB | Best instruction-following at this size. |

Vision Model (10-12 GB+ RAM phones)

| Model | Download | Quality |

|---|---|---|

| Gemma 4 E2B | 1.5 GB total | Understands images. Analyzes photos. Requires 10 GB+ RAM. |

| Gemma 4 E4B | 2.7 GB total | Better image analysis. Requires 12 GB+ RAM. |

Flagship (12 GB+ RAM)

| Model | Download | Quality |

|---|---|---|

| Gemma 3 12B | 6.8 GB | Near-desktop quality. Best on-device model available. |

How Smart Is a Phone AI vs. ChatGPT?

Honestly: less smart, but smarter than you'd expect.

A 3B parameter model (like Llama 3.2 3B) handles these well:

- General knowledge questions

- First aid and medical guidance

- Cooking and recipe help

- Writing assistance (emails, summaries, explanations)

- Math and calculations

- How-to instructions

- Language translation

- Study and learning

It struggles with:

- Multi-step complex reasoning

- Advanced coding tasks

- Very nuanced or ambiguous questions

- Tasks requiring current information (its knowledge is frozen at training time)

The 8B and 12B models close most of the gap. A Gemma 3 12B running on a flagship phone provides answers that would surprise people who assume "phone AI" means a basic chatbot.

Beyond Chat: What Phone AI Actually Does

Running a model on your phone isn't just about chat. Here's what's practical:

Document Search (Ask The Books)

Import PDF, EPUB, or DOCX files. Ask questions across all your documents. The AI searches your library, finds relevant passages, and gives you a sourced answer. Study tool, reference tool, research tool.

Image Analysis (Gemma 4)

Take a photo. The AI describes what it sees. Works for identifying terrain, analyzing environments, understanding unfamiliar objects. Requires 10 GB+ RAM.

Private Queries

The killer feature: no one sees your questions. Not the AI company. Not your ISP. Not Google. Medical questions, legal questions, financial questions, personal questions. All processed locally, never transmitted.

Offline Reference

Once downloaded, the AI works forever without internet. On a plane, in a basement, in the wilderness, in a country with restricted internet. The model's knowledge is baked in.

The Uncensored Option

Standard AI models are trained to refuse certain questions. "I can't help with that" is a familiar response if you've asked ChatGPT about anything it considers sensitive.

For most people, this is fine. But some people have legitimate reasons to want AI without guardrails:

- Researchers studying sensitive topics

- Writers creating realistic fiction involving violence, conflict, or controversial themes

- Professionals who need direct answers about chemical processes, mechanical systems, or medical procedures

- Anyone who believes they should decide what questions they're allowed to ask, not a corporation

HAVEN's Tactical Engineering category includes models specifically tuned for unrestricted access:

- Dolphin (by Cognitive Computations): Available in 1B, 3B, and 8B. Fine-tuned to minimize refusals.

- Abliterated Llama: Meta's Llama 3.1 8B with the refusal mechanism surgically removed.

- Hermes 3: Nous Research's model optimized for complex instruction-following without artificial constraints.

Dolphin 1B runs on virtually any phone (4 GB+ RAM, 770 MB download). You don't need a flagship device for unrestricted AI.

How to Get Started (No Command Line)

Here's the entire process:

1. Download HAVEN from the App Store or Google Play (free)

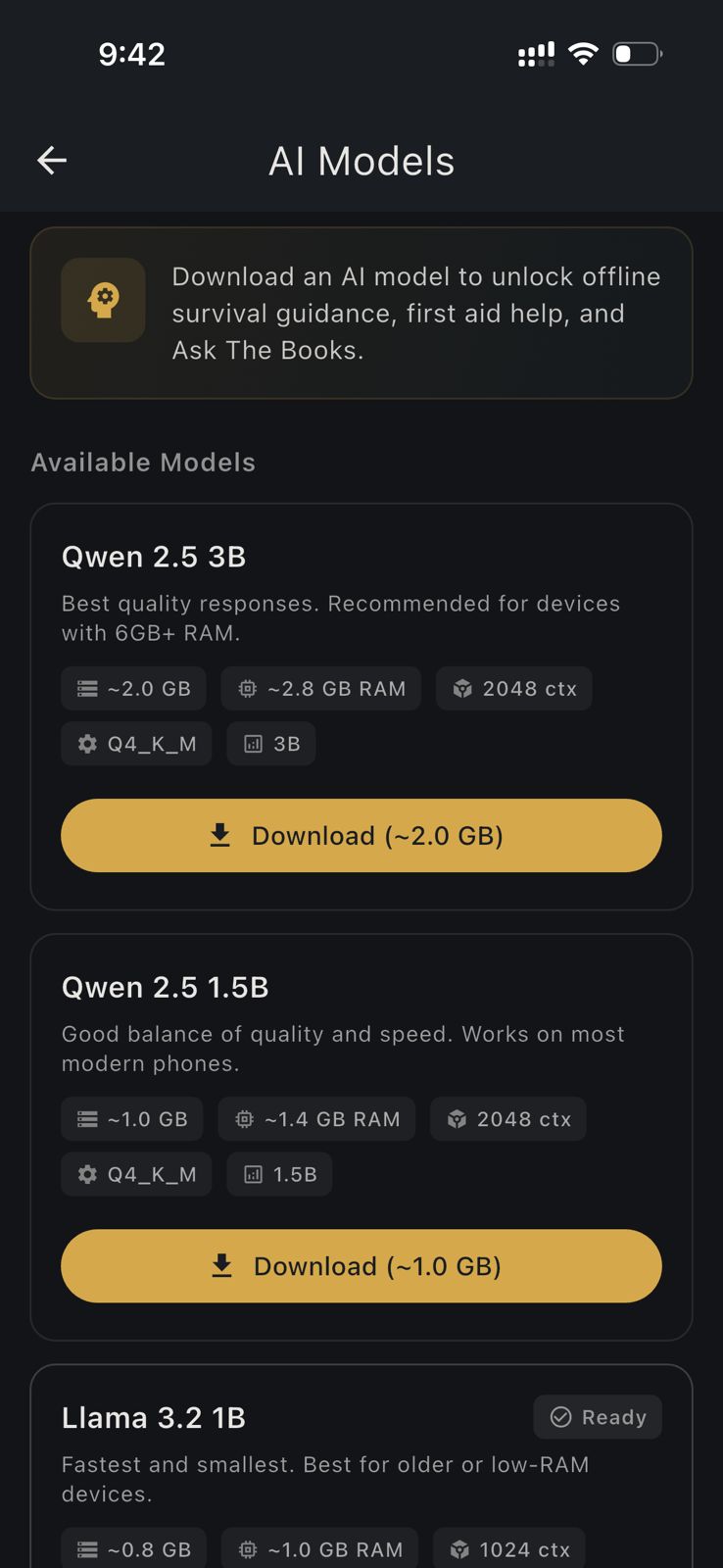

2. Open the app. Go to Settings, then AI Models

3. Browse the model list. Each model shows its download size and RAM requirement

4. Tap Download on a model that fits your phone

5. Wait for the download to complete (1-7 GB depending on model, use Wi-Fi)

6. Go back to the main screen and open AI Chat

7. Start typing

That's it. No Python. No pip install. No terminal. No Hugging Face account. No GGUF file management. No llama.cpp compilation. No command line flags.

The model downloads, loads into memory, and you chat. If you want a different model, download another one and switch. You can have multiple models installed and switch between them.

What You Need

Minimum: Any phone with 4 GB RAM and about 1 GB of free storage. This runs TinyLlama or Llama 3.2 1B.

Recommended: A phone with 6-8 GB RAM and 3-5 GB free storage. This runs the mid-range models (Llama 3.2 3B, Phi-4 Mini, Qwen 2.5 3B) that provide genuinely useful answers.

For vision/image AI: A phone with 10-12 GB+ RAM. This runs Gemma 4, which can analyze photos.

For maximum quality: A flagship phone with 12 GB+ RAM. This runs Gemma 3 12B, the best on-device model available.

Free vs. Pro

HAVEN's free tier gives you 15 AI messages per day with any downloaded model. No account needed.

Pro ($24.99 one-time, not a subscription) gives you:

- Unlimited AI messages

- Access to all models including tactical engineering

- Import your own GGUF models from Hugging Face

- Plant ID, Environment Scan, and other Pro features

The free tier is enough to try it and see if on-device AI meets your needs. Pro is for people who want to use it as their daily AI tool.

The Bottom Line

Running AI on your phone isn't experimental anymore. It's practical, private, and available right now. The models are good enough for real use. The phones are powerful enough to run them. And the setup has been reduced from "compile llama.cpp from source" to "download an app and tap a button."

If you've been curious about local AI but didn't want to deal with the technical setup, this is the version that's ready for everyone.

Ready to get prepared?

Download HAVEN free and start your preparedness journey today.